Survival analysis with knockoffs

Matthias Kormaksson, Kostas Sechidis

May 21, 2026

survival-analysis-with-knockoffs.RmdIntroduction

In this vignette we demonstrate how to do knockoff variable selection in the context of censored time-to-event data. We consider the following Cox regression model for knockoff variable selection for time-to-event data:

where is the hazard function corresponding to a censored time-to-event response and is the baseline hazard. The matrix is the design matrix corresponding to the covariates of interest, while represents a knockoff copy of .

Knockoff variable selection in Cox regression involves the following steps:

- Simulate a knockoff copy of the original covariates data .

- Compute feature statistics from the aggregated Cox regression with covariates and . Large, positive statistics indicate effect of on hazard rate.

- For FDR control use the knockoffs procedure to select variables that fulfill where This workflow selects variables associated with response with guaranteed control of false discovery rate .

Data generation

In this section we will simulate a toy data set with a known truth. In particular, we will simulate a set of covariates and survival times under censoring that are associated with some of the ’s through a Cox regression model.

Simulation of

:

The generate_X function simulates the rows of an

data frame

independently from a multivariate Gaussian distribution with mean

and

covariance matrix

where

randomly selected columns are then dichotomized with the indicator

function

.

# Generate a 2000 x 30 Gaussian data.frame under equi-correlation(rho=0.5) structure,

# with 10 of the columns dichotomized

set.seed(1)

X <- generate_X(n=2000, p=30, p_b=10, cov_type = "cov_equi", rho=0.5)The covariance type is specified with the parameter

cov_type and the correlation coefficient with

rho. Each column of the resulting data.frame is either of

class "numeric" (for the continuous columns) or

"factor" (for the binary columns).

Simulation of event and censoring times: The function

simulWeib simulates survival times

from a Cox regression model

with Weibull baseline hazard:

where

and

are scale and shape parameters, respectively. The censoring times

are randomly drawn from an exponential distribution with a small (fixed)

rate

,

which results in very mild censoring. Once

and

have been simulated the function sets time = min(T, C) and

event = 1{T < C}:

simulWeib <- function(N, lambda0, rho, lp) {

lambdaC = 5e-04

v <- runif(n = N)

Tlat <- (-log(v)/(lambda0 * exp(lp)))^(1/rho)

C <- rexp(n = N, rate = lambdaC)

time <- pmin(Tlat, C)

status <- as.numeric(Tlat <= C)

survival::Surv(time = time, event = status)

}In this toy data set we assume that the first five covariates affect the hazard through the linear predictor and then simulate the survival object:

lp <- generate_lp(X, p_nn=5, a=2)

set.seed(2)

y <- simulWeib(N=2000, lambda = 0.01, rho = 1, lp = lp)Knockoff (feature) statistics in Cox regression

Let’s now use the knockoff.statistics function to (a)

simulate independently

knockoffs

,

,

(b) fit the aggregated Cox model to above data:

for each of the

knockoff copies. Then finally calculate (for each knockoff

)

the corresponding knockoff (feature) statistics

.

set.seed(123)

W <- knockoff.statistics(y, X, type="survival", M=10)

head(W)

#> W1 W2 W3 W4 W5 W6

#> X1 1.74385575 1.688399422 1.72585475 1.74029139 1.7234531 1.725687035

#> X2 1.82294465 1.762800761 1.77717388 1.82853736 1.8040355 1.848307333

#> X3 1.79006418 1.731865250 1.76590859 1.78565227 1.7681818 1.816228377

#> X4 1.76204064 1.691894366 1.72672748 1.73142901 1.7408079 1.777589645

#> X5 1.80203975 1.734332717 1.77405548 1.78675847 1.7793463 1.786158683

#> X6 0.01294729 -0.004468954 -0.01579702 -0.03514107 -0.0258282 -0.001140941

#> W7 W8 W9 W10

#> X1 1.75022900 1.70711399 1.72540564 1.70512312

#> X2 1.80582574 1.77146158 1.81769534 1.78099929

#> X3 1.76818033 1.75156179 1.74726089 1.74963685

#> X4 1.73046674 1.72357287 1.75925751 1.72126777

#> X5 1.81131739 1.75966621 1.80142519 1.76013210

#> X6 0.01891569 0.01083217 0.01792927 0.01324787The output is a data.frame whose columns are the

knockoff statistics. In the above we have used (as a default) parallel

computing via the package clustermq (see: User

Guide - clustermq).

Variable selection via multiple knockoffs

We simulated

feature statistics in order to evaluate robustness of the knockoff

variable selection process, each time simulating a new knockoff of

X. To calculate which variables will be selected for each

of these knockoff statistics we use the variable.selections

function. This function takes the knockoff statistics W as

input and additionally specifies the error.type="fdr"

(default), "pfer" (per family error error), or

"kfwer" (k-familywise error rate) and the corresponding

target error level.

S = variable.selections(W, level = 0.2, error.type="fdr")

head(S$selected)

#> S1 S2 S3 S4 S5 S6 S7 S8 S9 S10

#> X1 1 1 1 1 1 1 1 1 1 1

#> X2 1 1 1 1 1 1 1 1 1 1

#> X3 1 1 1 1 1 1 1 1 1 1

#> X4 1 1 1 1 1 1 1 1 1 1

#> X5 1 1 1 1 1 1 1 1 1 1

#> X6 0 0 0 0 0 0 0 0 0 0

S$stable.variables

#> [1] "X1" "X2" "X3" "X4" "X5" "X23"In a nutshell the function will both calculate individual variable

selections (for each knockoff replicate) and a stable set of variables

that are selected most frequently among the M replicates.

For FDR-control the stable variables are calculated using the heuristics

in The multiple knockoffs

filter procedure, while for the other two error types

("pfer" and "kfwer") we simply choose all

variables selected more than thres*M times, where

thres is an optional parameter of the

variable.selections function (thres=0.5 by

default).

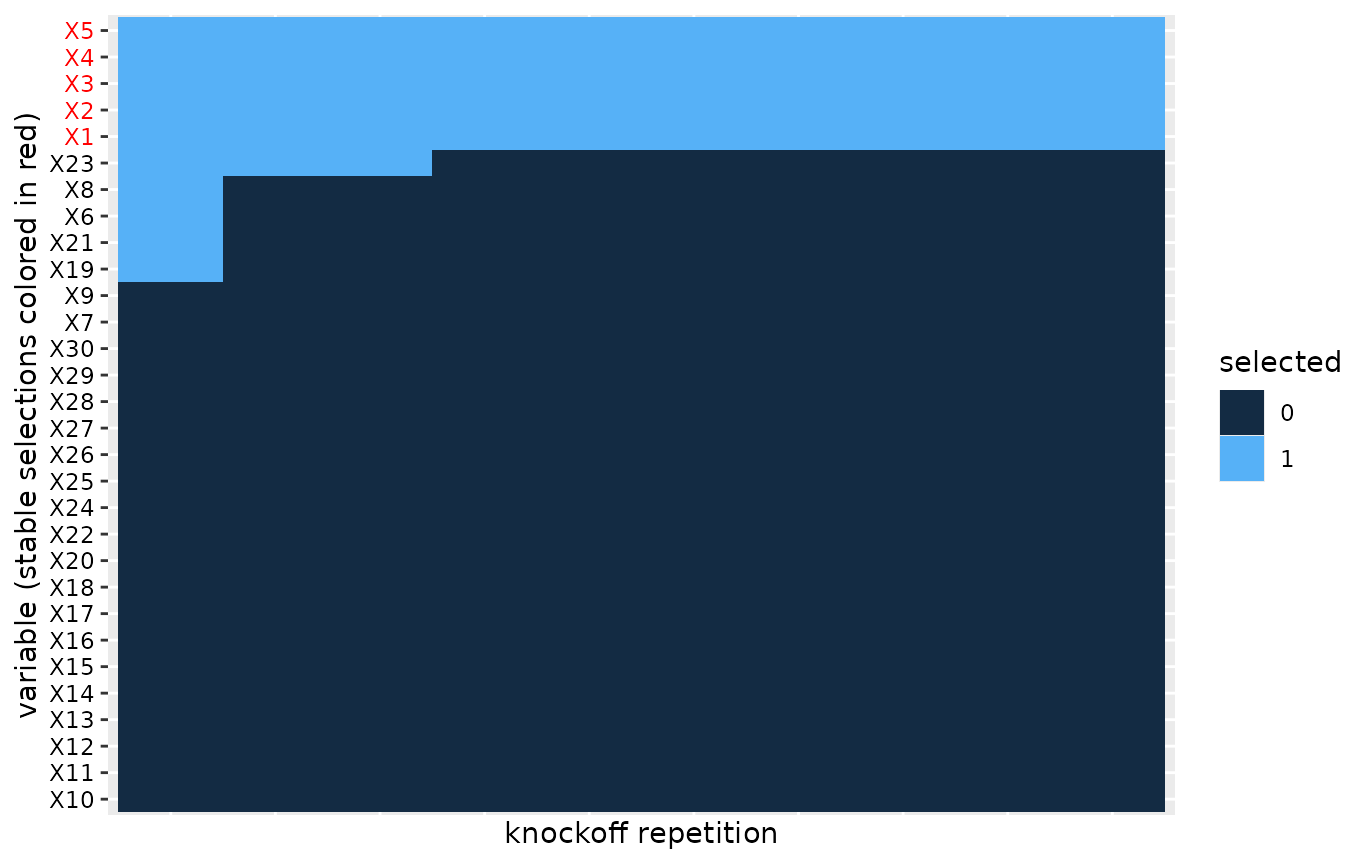

Heatmap of multiple variable selections

In order to evaluate the robustness of the knockoff selection procedure we can visualize a heatmap of the selections across the knockoff replicates.

plot(S)

#> Warning: Vectorized input to `element_text()` is not officially supported.

#> ℹ Results may be unexpected or may change in future versions of ggplot2. We apply co-clustering of the rows and columns of the heatmap, which

identifies blocks of similarities in the binary selections. We then

order the blocks according to increasing mean number of selections. This

helps with aesthetics, but also helps visualize the most important

variables that should tend towards the top of the heatmap.

We apply co-clustering of the rows and columns of the heatmap, which

identifies blocks of similarities in the binary selections. We then

order the blocks according to increasing mean number of selections. This

helps with aesthetics, but also helps visualize the most important

variables that should tend towards the top of the heatmap.

Appendix

A. The multiple knockoffs filter procedure for FDR-control

(Kormaksson et al. 2021) introduced a heuristic algorithm for selecting stable variables from multiple independent knockoff variable selections.

Let denote independent knockoff copies of . For each knockoff copy run the knockoff filter and select the set of influential variables, . We propose the following heuristics to select a final set of variables:

- Let , where , denote the set of variables selected more than times out of the knockoff draws.

- Let $S(r)=\underset{b}{\rm mode}\{F(r) \cap S_b\}$ denote the set of selected variables that appears most frequently, after filtering out variables that are not in .

- Return , where , i.e. the largest set among .

The first step above essentially filters out variables that don’t appear more than of the time, which would seem like a reasonable requirement in practice (e.g. with ). The second step above then filters the knockoff selections accordingly and searches for the most frequent variable set among those. The third step then establishes the final selection, namely the most liberal variable selection among the sets .